It’s hard to escape the perceived ‘threat’ of Artificial Intelligence: we are surrounded by stories warning us that robots are going to take our jobs, that AIs are horrendously unethical and un-PC, and that, bit by bit, other aspects of our lives will be taken over by hyper-intelligent machines.

In reality, advances in AI are contributing to our own creative development, and a great example of this is the emergence of Style Transfer techniques, which can extend our creativity and support us throughout the creative process.

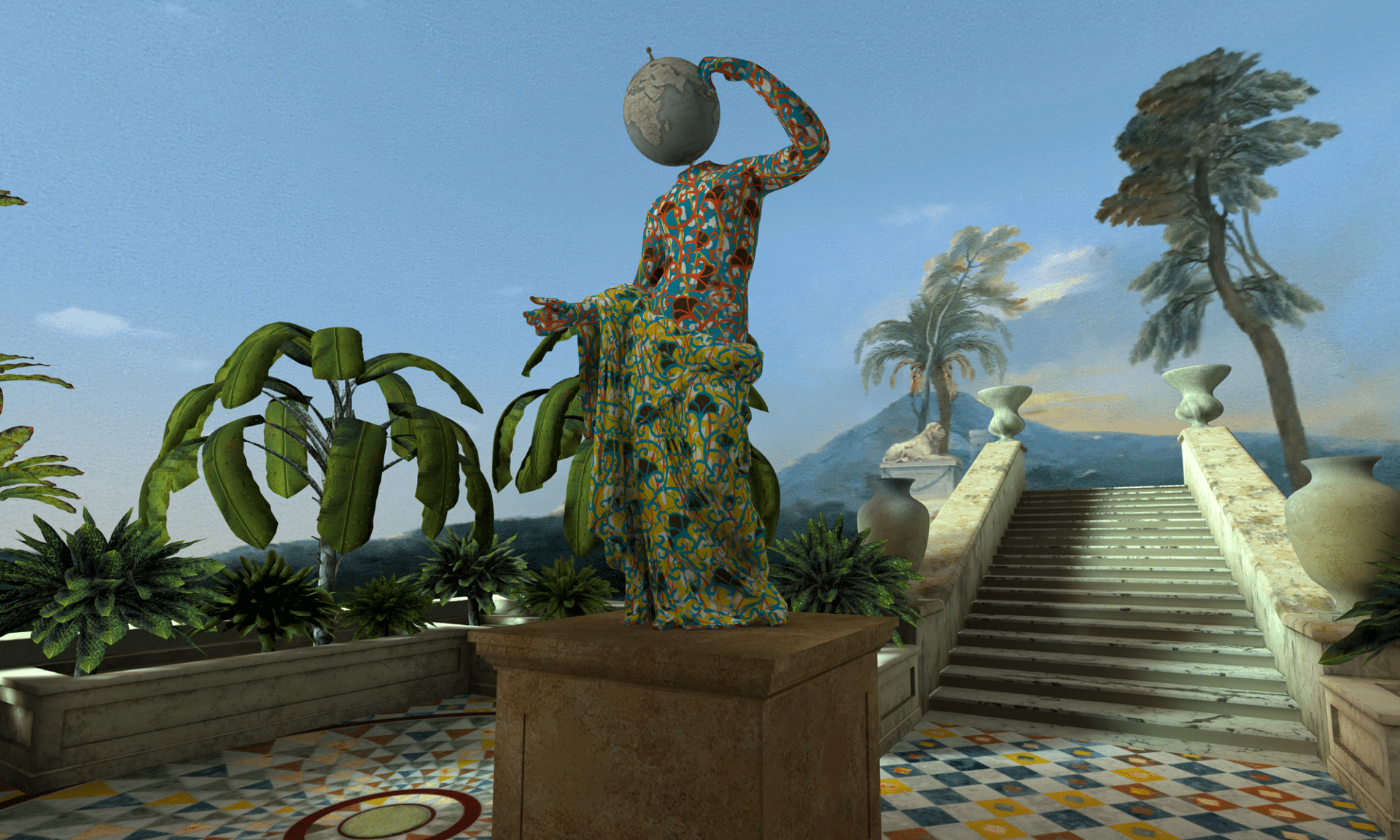

At Happy Finish, we’ve successfully incorporated pioneering Style Transfer techniques into our CG and VFX pipeline. We recently created a VR experience for the Royal Academy in collaboration with renowned artist Yinka Shonibare MBE, using AI to produce a higher-quality output. The experience lets the viewer step into a 3D version of a Neoclassical painting by Gavin Hamilton, explore it, and travel out into a courtyard to discover Yinka’s latest statue in VR form. Because our only reference was the front-facing 2D painting, we applied Style Transfer techniques based on Deep Neural Networks to mimic the Neoclassical painter’s style and fill in the gaps of the 3D scene. We used this technique both to texture the backs of the characters and to add new elements to the environment, such as plants, the floor and the staircase. Check out how this technique works in the video below:

Style Transfer techniques became popular with Prisma and other mobile applications that let users apply the style of paintings and pictures to their photos. Despite the popularity of these apps, big challenges remain in terms of the quality, speed and size of the results.

For the Royal Academy, we found it challenging to recreate the 3D assets in the scene whilst respecting the style of Gavin Hamilton and other Neoclassical painters, and for this purpose the Style Transfer technique was immensely helpful. We selected Neoclassical paintings as our data set and masked the parts of the images that contained plants and the other elements we needed. We then fed the AI system three inputs: the generic “content” textures (those we wanted to transform), the image masks (to correctly assign the different parts of the content images to the style) and the “style” images. For each content-style pair of images, the AI system, based on Deep Convolutional Neural Networks, learned how to recreate the content image using the visual features contained in the style image.
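One common way to implement this kind of masked (or "guided") Style Transfer is to use the Gram-matrix style loss popularised by Gatys et al., computed only over a masked region. The sketch below is a minimal illustration under stated assumptions, not our production code: it assumes feature maps have already been extracted from some convolutional network (which network is not specified here), the names `masked_gram` and `masked_style_loss` are hypothetical, and random arrays stand in for real activations. It shows how a mask restricts the style statistics to one region, such as the plants, so that region is matched only against the corresponding region of the style painting.

```python
import numpy as np


def masked_gram(features: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Gram matrix of one layer's feature maps, restricted to a binary mask.

    features: (C, H, W) activations from a convolutional layer (assumed given).
    mask:     (H, W) array, 1 inside the region of interest (e.g. plants), 0 elsewhere.
    """
    channels = features.shape[0]
    # Flatten the spatial dimensions and zero out every position outside the mask.
    f = features.reshape(channels, -1) * mask.reshape(1, -1)
    # Normalise by region size so small and large regions are comparable.
    n = max(float(mask.sum()), 1.0)
    return (f @ f.T) / n


def masked_style_loss(gen_feats, style_feats, gen_mask, style_mask) -> float:
    """Mean squared difference between the masked Gram matrices of the
    generated texture and the style painting (one layer, one region)."""
    g = masked_gram(gen_feats, gen_mask)
    s = masked_gram(style_feats, style_mask)
    return float(np.mean((g - s) ** 2))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    style = rng.random((8, 16, 16))      # stand-in for painting features
    generated = rng.random((8, 16, 16))  # stand-in for texture features
    plants = np.zeros((16, 16))
    plants[:, :8] = 1.0                  # hypothetical "plants" region
    print(masked_style_loss(generated, style, plants, plants))
```

In a full pipeline, an optimiser would adjust the generated texture to minimise this loss, summed over several layers and every masked region, together with a content loss that keeps the texture recognisable.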

The impact of Deep Learning-based techniques on the CGI and VFX pipeline will be huge. Style Transfer, together with other techniques such as Image-to-Image Translation (based on Generative Adversarial Networks) and Super-Resolution, will completely disrupt the way assets are generated. For example, we already use Super-Resolution when we need larger images (2x to 4x the original size) that preserve the original quality. Style Transfer will let us automate processes that are costly, take many hours of human work and sometimes offer no certainty about the final result. Image-to-Image Translation will let us automatically apply sophisticated VFX in real time.
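Which Super-Resolution model is used is not specified here. As a hedged illustration of how a learned 2x to 4x upscale is often produced, many Super-Resolution networks (ESPCN, for example) end with a sub-pixel convolution: the network predicts C·r² low-resolution channels, and a "pixel shuffle" rearranges them into a C-channel image that is r times larger in each dimension. The sketch below shows only that rearrangement step in NumPy; the function name `pixel_shuffle` is used for illustration, and the convolutional layers that would produce its input are omitted.

```python
import numpy as np


def pixel_shuffle(x: np.ndarray, r: int) -> np.ndarray:
    """Rearrange a (C*r*r, H, W) array into a (C, H*r, W*r) array.

    Output pixel (c, h*r + i, w*r + j) is taken from input channel
    c*r*r + i*r + j at position (h, w), the usual sub-pixel layout.
    """
    cr2, h, w = x.shape
    if cr2 % (r * r) != 0:
        raise ValueError("channel count must be divisible by r*r")
    c = cr2 // (r * r)
    x = x.reshape(c, r, r, h, w)       # split channels into (c, i, j)
    x = x.transpose(0, 3, 1, 4, 2)     # reorder to (c, h, i, w, j)
    return x.reshape(c, h * r, w * r)  # merge (h, i) and (w, j)


if __name__ == "__main__":
    # 4x upscale: 48 low-resolution channels become a 3-channel 20x20 image.
    features = np.random.default_rng(0).random((3 * 4 * 4, 5, 5))
    print(pixel_shuffle(features, 4).shape)  # (3, 20, 20)
```

Because the network does all its heavy computation at the low resolution and only rearranges values at the end, this design keeps upscaling relatively cheap compared with convolving at the full output size.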

We’re now able to use AI to support artists and automate a range of creative processes, but in the future, possibly ten or so years from now, “artificial imagination” will revolutionise the entire CGI and VFX industry as we know it. We’re excited to be using Artificial Intelligence to improve our creative work, and our confidence in incorporating AI into our CG and VFX pipeline opens up many possibilities for the future.

Got an AI project coming up and want to test our capabilities?